Neuro-Symbolic Generation of Explanations for Robot Policies with Weighted Signal Temporal Logic

IEEE Robotics & Automation Letters (RA-L), The 2026 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), The 2025 American Control Conference (ACC)

2026

University of Illinois Urbana-Champaign

Abstract

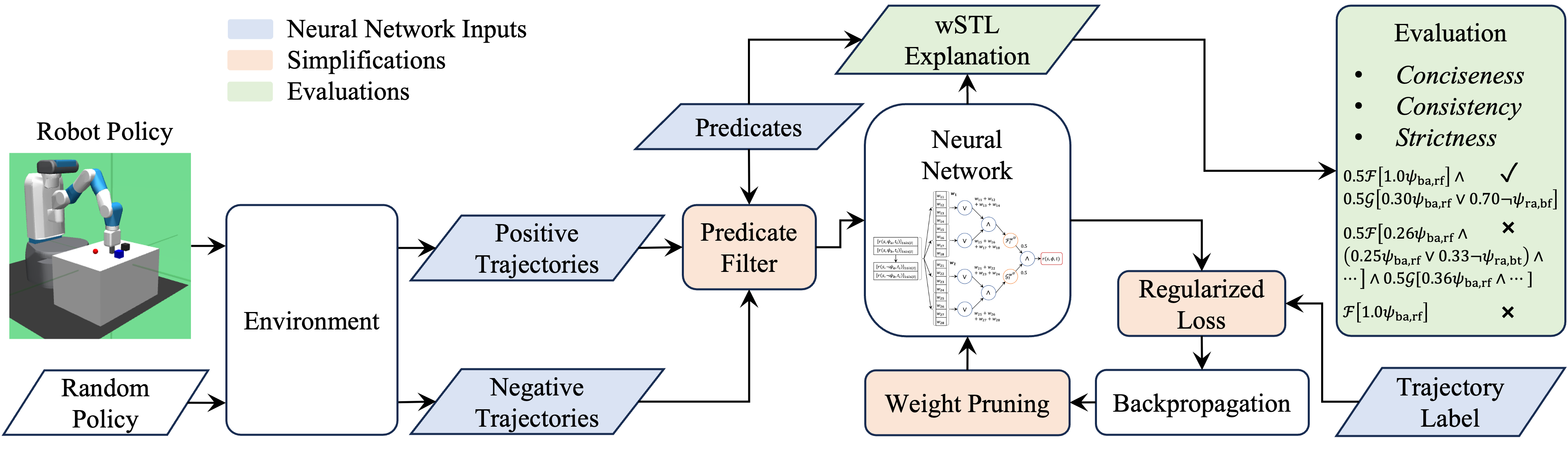

Learning-based policies have demonstrated success in many robotic applications, but often lack explainability. We propose a neuro-symbolic explanation framework that generates a weighted signal temporal logic (wSTL) specification describing a robot policy in a human-interpretable form. Existing methods typically produce explanations that are verbose and inconsistent, which hinders explainability, and loose, which limits the insight they offer. We address these issues with a simplification process consisting of predicate filtering, regularization, and iterative pruning. We also introduce three explainability metrics (conciseness, consistency, and strictness) to assess explanation quality beyond conventional classification accuracy. Our method, TLNet, is validated in three simulated robotic environments, where it outperforms baselines in generating concise, consistent, and strict wSTL explanations without sacrificing accuracy. This work bridges policy learning and explainability through formal methods, contributing to more transparent decision-making in robotics.
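For readers new to signal temporal logic, the sketch below illustrates the standard quantitative (robustness) semantics of plain, unweighted STL on a single trajectory. It is background only and is not the paper's wSTL semantics or the TLNet architecture: wSTL additionally attaches weights to subformulas, which this sketch omits. The function names, the distance trace, and the 0.5 threshold are all hypothetical values chosen for illustration.

```python
from typing import Sequence


def predicate_robustness(signal: Sequence[float], threshold: float) -> list[float]:
    """Robustness of the predicate `x > threshold` at every time step.

    Positive values mean the predicate holds with that margin;
    negative values mean it is violated by that margin.
    """
    return [x - threshold for x in signal]


def always(rho: Sequence[float]) -> float:
    """Robustness of 'always phi' over the full horizon: the worst-case margin."""
    return min(rho)


def eventually(rho: Sequence[float]) -> float:
    """Robustness of 'eventually phi' over the full horizon: the best-case margin."""
    return max(rho)


# Hypothetical distance-to-obstacle trace (illustrative numbers, not paper data).
distance = [1.5, 1.25, 1.0, 0.75, 1.0]

# Margin by which "distance > 0.5" holds at each step.
rho = predicate_robustness(distance, threshold=0.5)

always_safe = always(rho)          # smallest margin along the trajectory
eventually_safe = eventually(rho)  # largest margin along the trajectory

print(always_safe, eventually_safe)
```

A positive `always_safe` indicates that the trajectory satisfies "always stay more than 0.5 from the obstacle"; its magnitude is the margin by which the formula holds at the tightest point of the trajectory.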

Citation

@article{yuasa_neurosymbolic_2026,
  author={Yuasa, Mikihisa and Sreenivas, Ramavarapu S. and Tran, Huy T.},
  journal={IEEE Robotics and Automation Letters},
  title={Neuro-Symbolic Generation of Explanations for Robot Policies With Weighted Signal Temporal Logic},
  year={2026},
  volume={},
  number={},
  pages={1-8},
  keywords={Robots;Neural networks;Trajectory;Accuracy;Logic;Robustness;Measurement;Training;Syntactics;Network architecture;Formal Methods in Robotics and Automation;Reinforcement Learning;Deep Learning Methods;Human-Centered Robotics},
  doi={10.1109/LRA.2026.3662977}
}